Why you should still type code in 2026

Do your eyes glaze over reading your own diffs? Do you feel like you're forgetting how to code?

If so, I have a suggestion for you.

When you type code by hand, you get the best of LLMs without losing your coding skills.

That time I almost didn't learn to code

A year ago, I wrote about the risks of overusing AI[1]:

In software engineering, over-reliance on LLMs accelerates incompetence. LLMs can't replace human critical thinking.

Let me tell you about a time long before LLMs when I abandoned critical thinking.

In 2009, I began my second year of university. One of my computer science professors allowed his students to pair program on lab assignments. The guy I paired with was a strong programmer. He regularly placed in ACM competitions, so I thought I had won a golden ticket. For the first four labs, his hands were on the keyboard while I watched the screen. I didn't code. I felt like I should, but the longer this arrangement went on, the harder it became to jump in. I became dependent on him.

I felt uncomfortable. There was a mismatch between my goals and choices: I was in school to learn to program, and I wasn't programming.

I decided to do the fifth lab solo. It was tough. Everything I had observed passively up to that point in the course I suddenly had to engage with actively. It was the most stressful week of the semester, but it paid off. I completed the rest of the labs solo and finished the class with an A. Most importantly, I learned C and data structures. Looking back, I think that semester is when I really became a programmer.

The problem is dependence

I became dependent on my lab partner, and experienced devs today are dependent on their coding LLM of choice.

As with my lab partner, it's easy to let LLMs do the work. You can certainly go faster. The more they do for you, however, the more your skills shift from doing the work to managing the thing doing the work. That's a valid skill, but it isn't the one that made me choose this career and stay in it for fifteen years.

LLM providers do not have your benefit in mind. The sales pitch LLM providers have made to your CEO is that they can commoditize white-collar labor. The goal is to make you and me as fungible as a line chef or Uber driver.

In keeping with the standard VC-backed SaaS playbook, the current pricing of LLMs is deliberately unsustainable[2], in the hope of locking in as many users as possible, on whom providers will later jack up prices or reduce quality. This is already happening at Anthropic[3][4][5].

One day soon, LLMs could be too expensive for individuals like you and me or blockaded altogether. Some of the latest LLMs are being made available only to a small inner circle[6]. Do you want a future where the only way you can earn a living is by pulling a lever your employer furnishes?

The fix: type the code

Watching my lab partner code was not enough, reading a diff is not enough, and cognitive science has understood why for fifty years. They call it the generation effect.

The generation effect refers to the finding that subjects who generate information remember the information better than they do material that they simply read.

The science

The 2007 meta-analysis[7] quoted above gathered 86 studies on the generation effect. It revealed that generating information, rather than reading the same information, yields an improvement of just under half a standard deviation (d ≈ 0.40). Practically, this means the average generator outperforms 66% of readers on memory tests.
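The jump from d ≈ 0.40 to "66% of readers" is a standard conversion, and it is easy to check. Here is a minimal sketch, assuming both groups' scores are normally distributed with equal variance (an assumption of this sketch, not something taken from the paper). The function name `share_outperformed` is made up for illustration.

```python
from statistics import NormalDist


def share_outperformed(d: float) -> float:
    """Share of the reading group that the *average* generator outperforms.

    Assumes both groups are normally distributed with equal variance,
    so the answer is the standard normal CDF evaluated at Cohen's d.
    """
    return NormalDist().cdf(d)


if __name__ == "__main__":
    d = 0.40  # effect size reported in the text above
    print(f"d = {d:.2f} -> {share_outperformed(d):.1%}")  # d = 0.40 -> 65.5%
```

An effect size of zero gives exactly 50%, meaning no advantage. At d = 0.40 the result is about 65.5%, which rounds to the 66% quoted above.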

A 2020 meta-analysis[8] covering 126 studies on the generation effect showed that the advantage grows the less "constrained" the task is: the more freedom a learner has in producing an answer, the more they'll remember. For example, you'll learn more by writing a freeform answer than by answering multiple-choice questions. In coding, this means you're less likely to remember code you pasted or tab-completed than code you typed yourself.

The studies above were on text, not code, but a few subsequent papers have applied similar active-learning mechanisms to programming students with consistent results[9][10].

These studies all sit under a broader principle called "desirable difficulties". Learning methods that involve friction and difficulty often produce better long-term retention[11]. Together, they show the generation effect is robustly replicable across hundreds of studies spanning fifty years.

The application

Code is not exactly prose. Humans read it nonlinearly, yet it's more structured: it's recursive, has a formal grammar, is often type-checkable, and parses into an abstract syntax tree. The principle that generation deepens memory encoding and recall has no obvious reason to stop at those differences.

Remember when we used to type code? For me, that was only about 9 months ago. How medieval it sounds in May 2026! Yet the science suggests you need to type the code yourself.

A colleague who typed the code in her PR herself will remember more of it than if she had prompted for it. A year later, she might remember specific details, for example an off-by-one error she debugged, that she otherwise might not.

I already refuse to let LLMs write English prose for me. I use them to ideate, but every word you read, my fingers typed. I promise that for several reasons, but above all, because writing makes the writer.

Why wouldn't the generation effect apply to code as well? The act of programming makes the programmer.

What to do right now

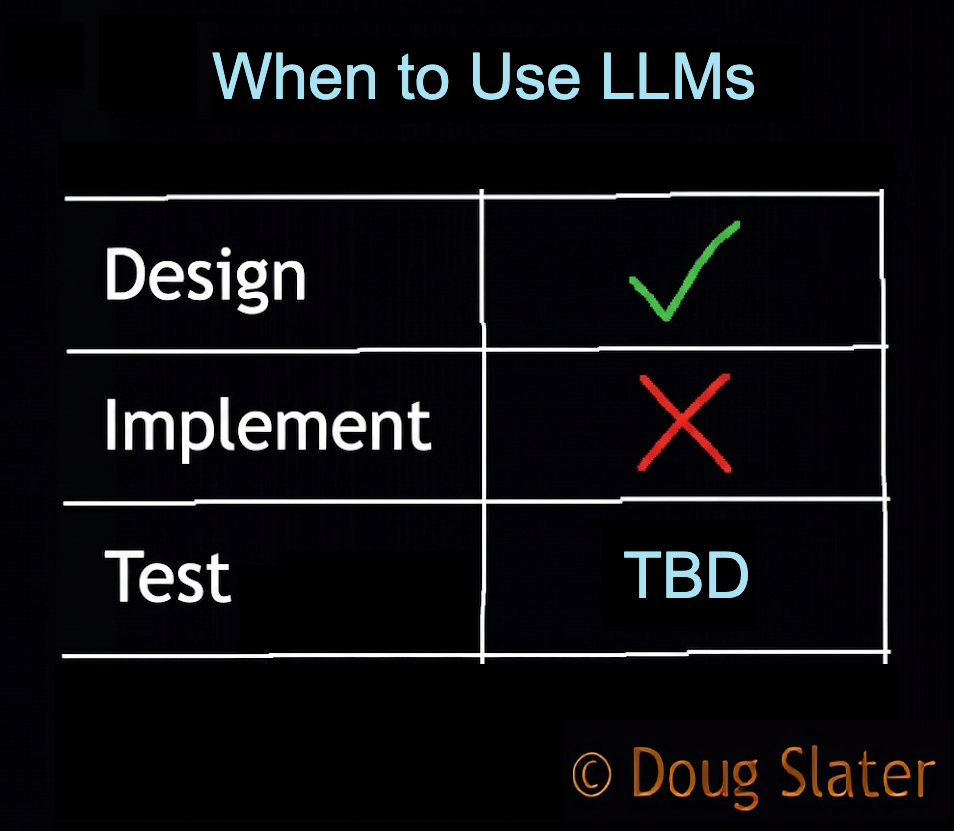

Right now, add this to the end of your CLAUDE.md file, or equivalent for your LLM:

# User will Implement

Once we have finished the design and planning
steps, do not offer to write the code.
You will give me a sequence of specific edits
to make, and I will type the code by hand.
This will enable me to actively participate
in implementation instead of merely reading
a diff, so that I remember what we did.
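If you keep instruction files in several repositories, a small script can append the section for you. The following is only a sketch. The helper name `add_section` is hypothetical, and it assumes the file is a plain UTF-8 text file that you pass in by path. It skips the append when the heading line is already present, so running it twice is harmless.

```python
from pathlib import Path

# The instruction block from the text above, reproduced verbatim.
SECTION = """\
# User will Implement

Once we have finished the design and planning
steps, do not offer to write the code.
You will give me a sequence of specific edits
to make, and I will type the code by hand.
This will enable me to actively participate
in implementation instead of merely reading
a diff, so that I remember what we did.
"""


def add_section(path: Path, section: str = SECTION) -> bool:
    """Append `section` to the file at `path` unless its heading already exists.

    Returns True if the file was written, False if it was left untouched.
    """
    existing = path.read_text(encoding="utf-8") if path.exists() else ""
    heading = section.splitlines()[0]
    if heading in existing.splitlines():
        return False  # already present, nothing to do
    # Separate the new section from prior content with one blank line.
    prefix = existing.rstrip("\n") + "\n\n" if existing.strip() else ""
    path.write_text(prefix + section, encoding="utf-8")
    return True
```

Calling `add_section(Path("CLAUDE.md"))` from a repository root would append the block there. Where the file actually lives depends on your tool, so treat that path as an assumption.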

For your next ticket or feature, engage with your LLM as you normally would to produce a design and implementation plan, but with that plan in hand, make the mechanical edits yourself.

Your eyes reading the instructions and fingers hitting the keys cause the code to pass through your brain, engaging your visual cortex, cerebellum, and motor cortex. If the research is right, you can expect this to improve your knowledge retention compared to merely reading a diff.

You want to go slower now so that later you can go at all.

Objections

Someone will object, "Doug, my employer is measuring our use of AI and my productivity. If I do what you're saying, I'll get fired."

This is where you need to be honest about your values. It's understandable if a paycheck is your top priority. I too have a family to feed, but you should know there is a personal cost. If you value your personal development, it might be time to start looking for a place that understands the concerns I'm writing about.

If anyone in your chain of command will listen, show them the research. Use of AI might not speed you up[12]. If it does, it is likely at the cost of your skills[13][14][15][16][17]. That also exposes the business to future risk. When you don't understand the code, it is legacy code the instant it merges. Future development will be slower and more bug-prone. The business's dependence on LLMs will compound.

Further reading

If this post clicked with you, I drum a similar beat about LLMs and coding in these posts:

- AI: Accelerated Incompetence claims that "in software engineering, over-reliance on LLMs accelerates incompetence."

- LLMs are not Bicycles for the Mind shows that "LLMs are more like E-bikes. More assist makes you go faster, but provides less exercise"

- Who wins in an Arms Race? argues that "in a competitive landscape, LLMs, like supershoes, do not result in your benefit"

References

My posts

Others' posts

- Ed Zitron, "AI's Economics Don't Make Sense" (Where's Your Ed At)

- Anthropic confirmed it has been quietly adjusting (r/claude)

- Heavy Claude Max 5x user here, something changed (r/ClaudeCode)

- Anthropic tested removing Claude Code from the Pro plan (Ars Technica)

- Project Glasswing (Anthropic)

Academic research

- Bertsch, S., Pesta, B. J., Wiscott, R., & McDaniel, M. A. (2007). The generation effect: A meta-analytic review. Memory & Cognition, 35(2), 201–210.

- McCurdy, M. P., Viechtbauer, W., Sklenar, A. M., Frankenstein, A. N., & Leshikar, E. D. (2020). Theories of the generation effect and the impact of generation constraint: A meta-analytic review. Psychonomic Bulletin & Review, 27(6), 1139–1165.

- Margulieux, L. E., Morrison, B. B., & Decker, A. (2020). Reducing withdrawal and failure rates in introductory programming with subgoal labeled worked examples. International Journal of STEM Education, 7, Article 19.

- Hassan, M., & Zilles, C. (2023). On students' usage of tracing for understanding code. In Proceedings of the 54th ACM Technical Symposium on Computer Science Education V. 1 (pp. 129–136). ACM.

- Bjork, E. L., & Bjork, R. A. (2011). Making things hard on yourself, but in a good way: Creating desirable difficulties to enhance learning. In M. A. Gernsbacher, R. W. Pew, L. M. Hough, & J. R. Pomerantz (Eds.), Psychology and the real world: Essays illustrating fundamental contributions to society (pp. 56–64). Worth Publishers.

- Becker, J., Rush, N., Barnes, B., & Rein, D. (2025, July 10). Measuring the impact of early-2025 AI on experienced open-source developer productivity. METR.

- Gerlich, M. (2025). AI tools in society: Impacts on cognitive offloading and the future of critical thinking. Societies, 15(1), Article 6.

- Singh, A., Taneja, K., Guan, Z., & Ghosh, A. (2025). Protecting human cognition in the age of AI (arXiv:2502.12447). arXiv.

- Lee, H.-P., Sarkar, A., Tankelevitch, L., Drosos, I., Rintel, S., Banks, R., & Wilson, N. (2025). The impact of generative AI on critical thinking: Self-reported reductions in cognitive effort and confidence effects from a survey of knowledge workers. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems (pp. 1–22). ACM.

- Shen, J. H., & Tamkin, A. (2026). How AI impacts skill formation (arXiv:2601.20245). arXiv.

- Macnamara, B. N., Berber, I., Çavuşoğlu, M. C., Krupinski, E. A., Nallapareddy, N., Nelson, N. E., Smith, P. J., Wilson-Delfosse, A. L., & Ray, S. (2024). Does using artificial intelligence assistance accelerate skill decay and hinder skill development without performers' awareness? Cognitive Research: Principles and Implications, 9(1), Article 46.